High scalability with Fanout and Fastly

Fanout Cloud is for high-scale data push. Fastly is for high-scale data pull. Many realtime applications work with data that is both pushed and pulled, and so can benefit from using both systems in the same application. Fanout and Fastly can even be connected together!

Using Fanout and Fastly in the same application, independently, is pretty straightforward. For example, at initialization time, past content could be retrieved from Fastly, and Fanout Cloud could provide future pushed updates. What does it mean to connect the two systems together though? Read on to find out.

Proxy chaining

Since Fanout and Fastly both work as reverse proxies, it is possible to have Fanout proxy traffic through Fastly rather than sending it directly to your origin server. This provides some unique benefits:

- Cached initial data. Fanout lets you build API endpoints that serve both historical and future content, for example an HTTP streaming connection that returns some initial data before switching into push mode. Fastly can provide that initial data, reducing load on your origin server.

- Cached Fanout instructions. Fanout’s behavior (e.g. transport mode, channels to subscribe to, etc.) is determined by instructions provided in origin server responses, usually in the form of special headers such as Grip-Hold and Grip-Channel. Fastly can cache these instructions/headers, again reducing load on your origin server.

- High availability. If your origin server goes down, Fastly can serve cached data and instructions to Fanout. This means clients could connect to your API endpoint, receive historical data, and activate a streaming connection, all without needing access to the origin server.

Network flow

Suppose there’s an API endpoint /stream that returns some initial data and then stays open until there is an update to push. With Fanout, this can be implemented by having the origin server respond with instructions:

HTTP/1.1 200 OK
Content-Type: text/plain
Content-Length: 29
Grip-Hold: stream
Grip-Channel: updates

{"data": "current value"}
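
On the origin side, producing that response mostly comes down to attaching the two Grip headers to an otherwise ordinary reply. Here is a minimal, framework-agnostic sketch in Python. The function name build_stream_response and its (status, headers, body) return shape are assumptions made up for illustration, not part of any Fanout library.

```python
import json


def build_stream_response(value, channel="updates"):
    """Return (status, headers, body) for a hypothetical /stream handler."""
    # The initial data that the client sees before any pushed updates.
    body = json.dumps({"data": value}) + "\n"
    headers = {
        "Content-Type": "text/plain",
        # Instruct the proxy to hold the connection open as an HTTP stream.
        "Grip-Hold": "stream",
        # Subscribe the held connection to this channel.
        "Grip-Channel": channel,
    }
    return 200, headers, body


status, headers, body = build_stream_response("current value")
```

In a real application, the returned headers and body would be copied onto whatever response object your web framework uses.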

When Fanout Cloud receives this response from the origin server, it converts it into a streaming response to the client:

HTTP/1.1 200 OK
Content-Type: text/plain
Transfer-Encoding: chunked
Connection: Transfer-Encoding

{"data": "current value"}

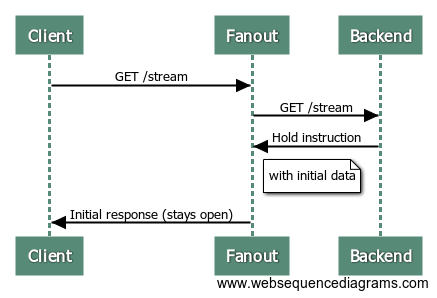

The request between Fanout Cloud and the origin server is now finished, but the request between the client and Fanout Cloud remains open. Here’s a sequence diagram of the process:

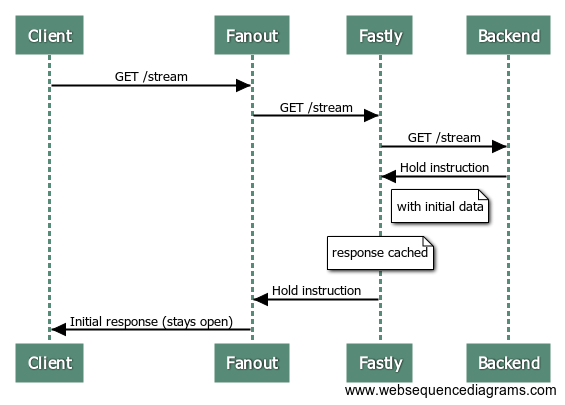

Since the request to the origin server is just a normal short-lived request/response interaction, it can alternatively be served through a caching server such as Fastly. Here’s what the process looks like with Fastly in the mix:

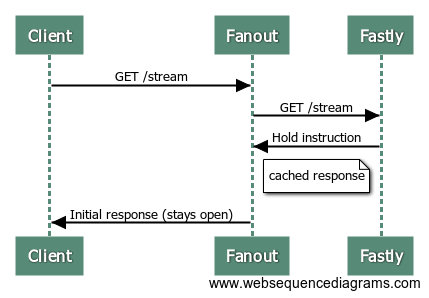

Now, guess what happens when the next client makes a request to the /stream endpoint?

That’s right, the origin server isn’t involved at all! Fastly serves the same response to Fanout Cloud, with those special HTTP headers and initial data, and Fanout Cloud sets up a streaming connection with the client.
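
For Fastly to hand out the same response again, the origin response has to be cacheable. One way to arrange that is with Fastly's Surrogate-Control and Surrogate-Key response headers, sketched below. The function name, the TTL, and the key value are assumptions chosen for illustration; your setup (and the demo later in this post) may handle caching differently.

```python
def add_fastly_cache_headers(headers, ttl_seconds=3600, surrogate_key="stream"):
    """Return a copy of the response headers with CDN caching hints added."""
    cached = dict(headers)
    # Surrogate-Control tells the CDN how long it may keep the response.
    cached["Surrogate-Control"] = f"max-age={ttl_seconds}"
    # Surrogate-Key tags the response so it can later be purged by key.
    cached["Surrogate-Key"] = surrogate_key
    return cached


origin_headers = {
    "Content-Type": "text/plain",
    "Grip-Hold": "stream",
    "Grip-Channel": "updates",
}
cacheable_headers = add_fastly_cache_headers(origin_headers, ttl_seconds=60)
```

The Grip headers are left untouched, so the cached copy still carries the instructions Fanout Cloud needs.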

Of course, this is only the connection setup. To send updates to connected clients, the data must be published to Fanout Cloud.

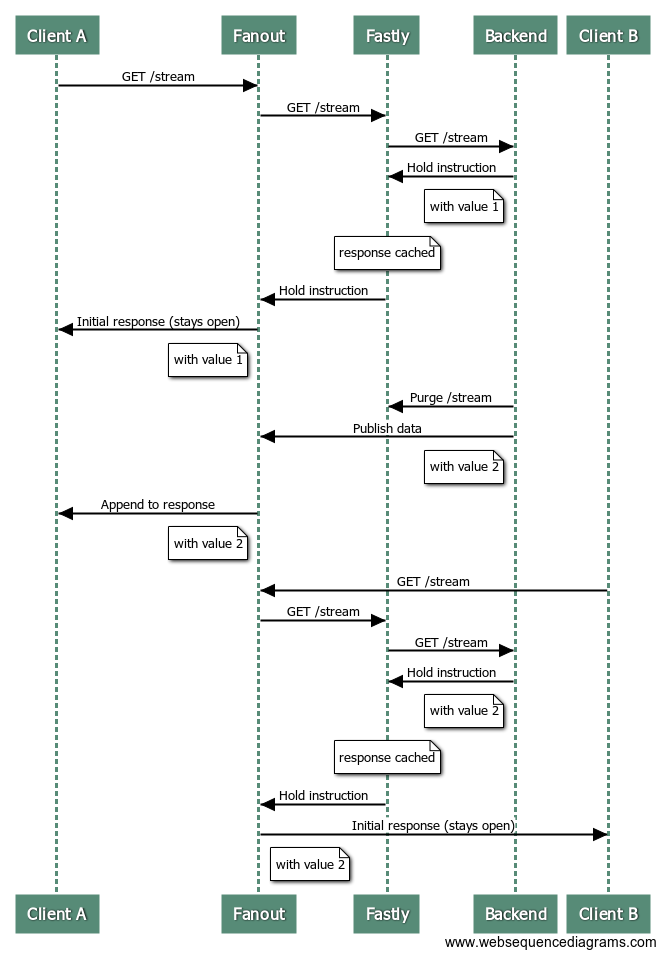
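
To make the publish step a bit more concrete, here is a rough sketch of the payload for one update, following the GRIP publish format (a list of items, each with a channel and an http-stream content string). The helper names are made up for illustration, and the request itself (publish endpoint URL and authentication for your realm) is deliberately left out, since it depends on your account.

```python
import json


def build_publish_item(channel, value):
    """Build one publish item that appends a line to held HTTP streams."""
    # The content is written as-is to every connection subscribed to channel.
    content = json.dumps({"data": value}) + "\n"
    return {"channel": channel, "formats": {"http-stream": {"content": content}}}


def build_publish_body(items):
    """Serialize a list of publish items into a JSON request body."""
    return json.dumps({"items": items})


item = build_publish_item("updates", "new value")
request_body = build_publish_body([item])
```

The request body would then be sent in an HTTP POST to the publish endpoint.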

We may also need to purge the Fastly cache if an event that triggers a publish also changes the origin server response. For example, suppose the “value” that the /stream endpoint serves has been changed. The new value could be published to all current connections, but we’d also want any new connections that arrive afterwards to receive this latest value, rather than the older cached value. This can be solved by purging from Fastly and publishing to Fanout Cloud at the same time.
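
As a sketch of that idea, the update path can be written as one function that changes the stored value, purges, and publishes, in that order. Everything here is hypothetical: store stands in for wherever the origin keeps its data, purge would wrap a Fastly purge request, and publish would wrap a publish call to Fanout Cloud. Both are passed in as plain callables so the ordering is easy to see.

```python
def update_value(new_value, store, purge, publish):
    """Apply a change, then purge the cache and publish it in one step."""
    # 1. Update the origin's state, so a cache miss returns the new value.
    store["value"] = new_value
    # 2. Purge, so new connections stop receiving the old cached response.
    purge()
    # 3. Publish, so connections that are already open receive the change.
    publish(new_value)


log = []
store = {"value": "old value"}
update_value(
    "new value",
    store,
    purge=lambda: log.append("purge"),
    publish=lambda value: log.append("publish " + value),
)
```

Updating the stored value before purging matters: if the purge came first, a request arriving in between could put the old value back into the cache.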

Here’s a (long) sequence diagram of a client connecting, receiving an update, and then another client connecting:

At the end of this sequence, the first and second clients have both received the latest data.

Rate-limiting

One gotcha with purging at the same time as publishing: if your data rate is high, the constant purging can negate the caching benefit of using Fastly.

The sweet spot is data that is accessed frequently (many new visitors per second), changes infrequently (on the order of minutes), and needs its changes delivered instantly (sub-second). An example could be a live blog. In that case, most requests can be served from cache.

If your data changes multiple times per second (or has the potential to change that fast during peak moments), and you expect frequent access, you really don’t want to be purging your cache multiple times per second. The workaround is to rate-limit your purges. For example, during periods of high throughput, you might purge and publish at a maximum rate of once per second or so. This way the majority of new visitors can be served from cache, and the data will be updated shortly after.
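
One way to rate-limit is to coalesce updates: remember only the latest value, and run the purge and publish step at most once per interval. The class below is a minimal sketch of that approach, not the demo's actual code. The class name, the flush callable (which would do the purge and publish), and the injectable clock are all assumptions made for illustration.

```python
import time


class RateLimitedFlusher:
    """Run flush(value) at most once per min_interval, keeping the latest value."""

    def __init__(self, flush, min_interval=1.0, clock=time.monotonic):
        self._flush = flush
        self._min_interval = min_interval
        self._clock = clock
        self._last_flush = None
        self._pending = None
        self._has_pending = False

    def update(self, value):
        """Record a new value and flush it right away if the limit allows."""
        self._pending = value
        self._has_pending = True
        return self.maybe_flush()

    def maybe_flush(self):
        """Flush the pending value if one exists and the interval has elapsed."""
        if not self._has_pending:
            return False
        now = self._clock()
        if self._last_flush is not None and now - self._last_flush < self._min_interval:
            return False
        value = self._pending
        self._pending = None
        self._has_pending = False
        self._last_flush = now
        self._flush(value)
        return True
```

The first update goes out immediately, and later updates inside the interval only replace the pending value. Something still has to call maybe_flush() again once the interval has passed (a timer or periodic task, for example), otherwise the last value of a burst would sit unsent.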

An example

We created a Live Counter Demo to show off this combined Fanout + Fastly architecture. Requests first go to Fanout Cloud, then to Fastly, then to a Django backend server which manages the counter API logic. Whenever a counter is incremented, the Fastly cache is purged and the data is published through Fanout Cloud. The purge and publish process is also rate-limited to maximize caching benefit.

The code for the demo is on GitHub.
